AI People: Long-Term Memory

Have you ever noticed NPCs forgetting what they were doing or the details of your recent conversations? Not anymore! We’ve supercharged NPC memory—think of it as giving them a big dose of ginkgo biloba.

Key Findings: Large Language Models (LLMs) exhibit significant limitations in handling sequentially dependent operations. Our simple word-swap experiment reveals that most models struggle to perform correctly beyond two consecutive word-swap operations, highlighting a critical weakness in their sequential reasoning.
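To make "sequentially dependent operations" concrete, here is a minimal sketch of a chain of word swaps. The task format is an assumption for illustration, not the one used in the experiment: each swap names two words whose positions are exchanged, and every swap acts on the result of the previous one. Getting step *k* right therefore requires correctly tracking all earlier steps. The function name `apply_word_swaps` is hypothetical.

```python
# Hypothetical task format (assumption, not the experiment's actual setup):
# a sentence of unique words plus an ordered list of (word_a, word_b) swaps.
# Each swap is applied to the output of the previous one.

def apply_word_swaps(sentence: str, swaps: list[tuple[str, str]]) -> str:
    """Apply the swaps in order and return the resulting sentence."""
    words = sentence.split()
    if len(set(words)) != len(words):
        # Swapping by word identity is ambiguous if a word repeats.
        raise ValueError("this sketch assumes every word is unique")
    for a, b in swaps:
        # Look up the current positions, which depend on all earlier swaps.
        i, j = words.index(a), words.index(b)
        words[i], words[j] = words[j], words[i]
    return " ".join(words)


if __name__ == "__main__":
    start = "red green blue yellow"
    swaps = [("red", "blue"), ("green", "yellow"), ("blue", "green")]
    # Print the state after 1, 2 and 3 swaps.
    for k in range(1, len(swaps) + 1):
        print(k, apply_word_swaps(start, swaps[:k]))
    # 1 blue green red yellow
    # 2 blue yellow red green
    # 3 green yellow red blue
```

Each additional swap depends on the full state produced so far, which is the kind of step-by-step bookkeeping such an experiment probes.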

We are glad to announce that our paper “Beyond Prompts: Dynamic Conversational Benchmarking of Large Language Models” has been accepted to NeurIPS 2024, where we will present our work on Long-Term Memory.

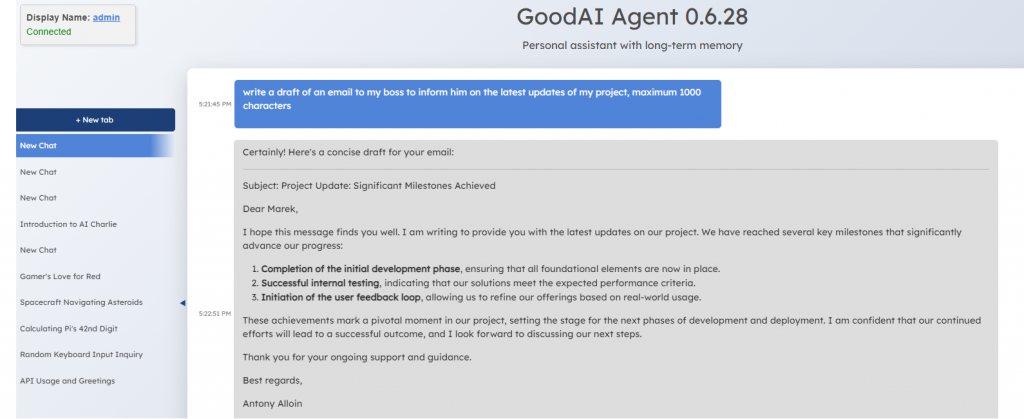

We are announcing major updates for Charlie Mnemonic, your AI assistant with Long-Term Memory that keeps getting smarter and more capable every day. We’ve been working hard to integrate new features and improve existing ones, and we are excited to share them with you.

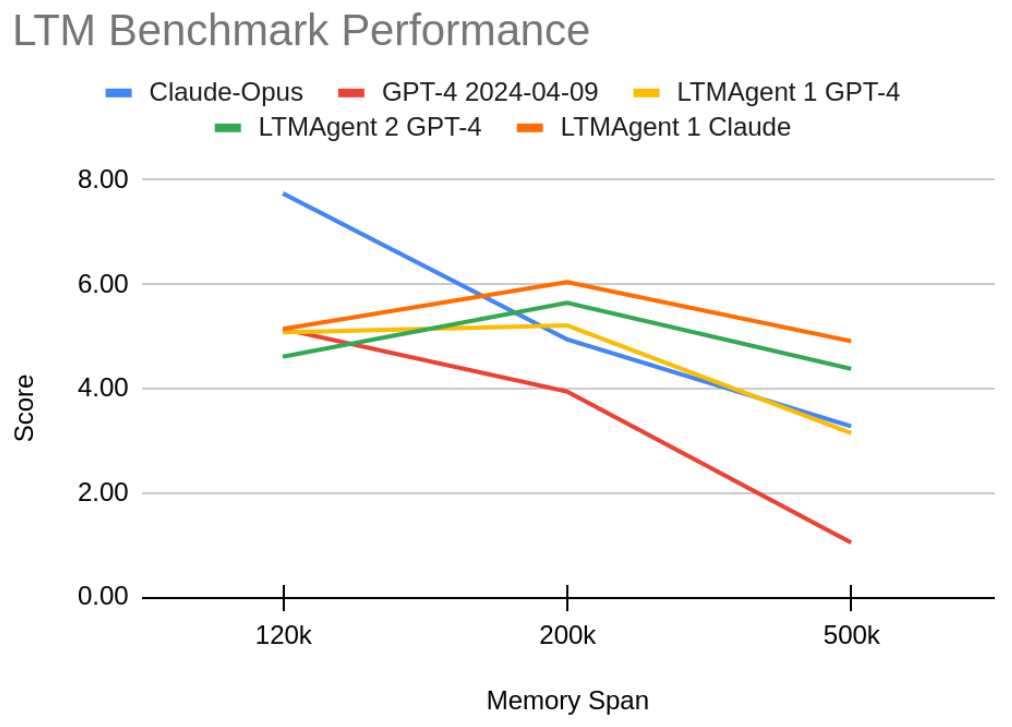

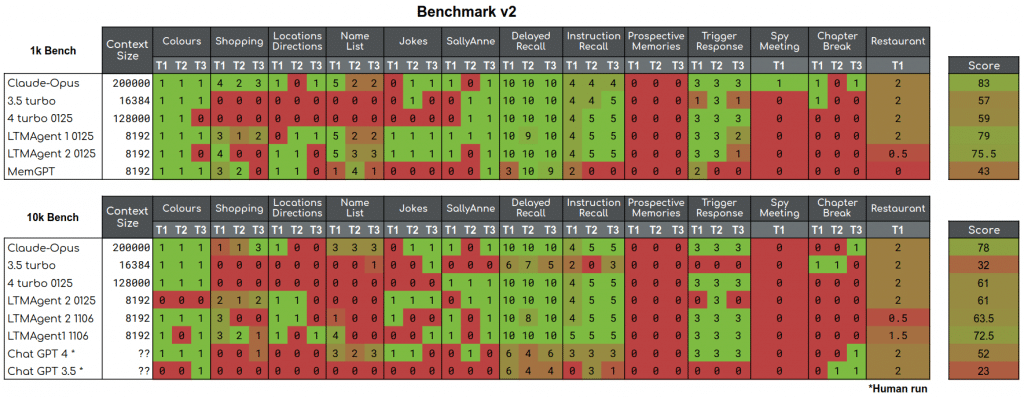

A Standardization Release: The main purpose of the GoodAI LTM Benchmark has always been to serve as an objective measure for our progress in the development of agents capable of continual and life-long learning.

Note: This post is part of a series of blog posts on the LTM benchmark. In the first post we outline our motivation for the benchmark, and in the next post we describe the current results. At GoodAI, we are committed to…

As part of our research efforts in continual learning, we are open-sourcing Charlie Mnemonic, the first personal assistant (LLM agent) equipped with Long-Term Memory (LTM).

As part of our research efforts in the area of continual learning, we are open-sourcing a benchmark for testing agents’ ability to perform tasks that involve advanced use of memory over very long conversations.
